The other major holiday project I worked on over the end-of-year break was to take my 3D engine and build something with it. I have a lot of little tech demos and ideas but nothing that’s actually “finished”.

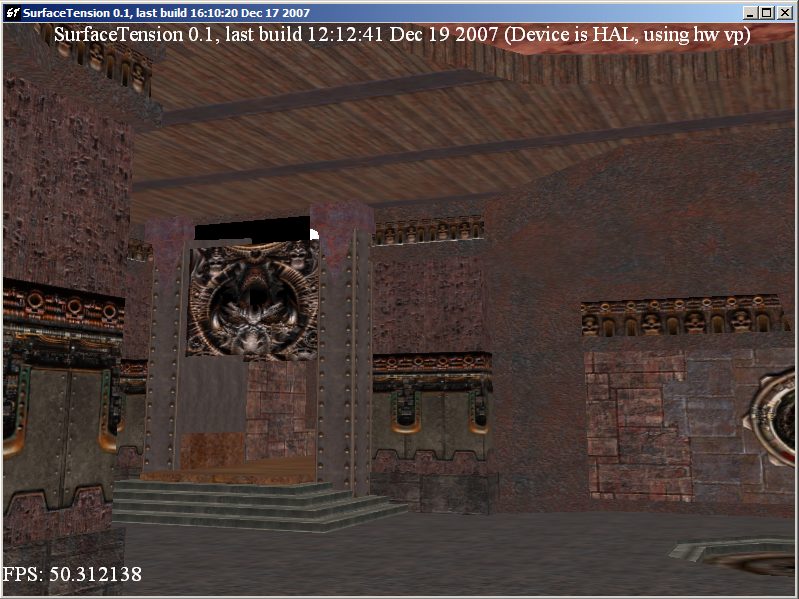

Many years ago, I worked on a 3D engine that ended up being capable of loading Quake 3 maps:

So I thought – why not do what I didn’t do in 2007 – actually build something that is more or less a clone of Quake 3?

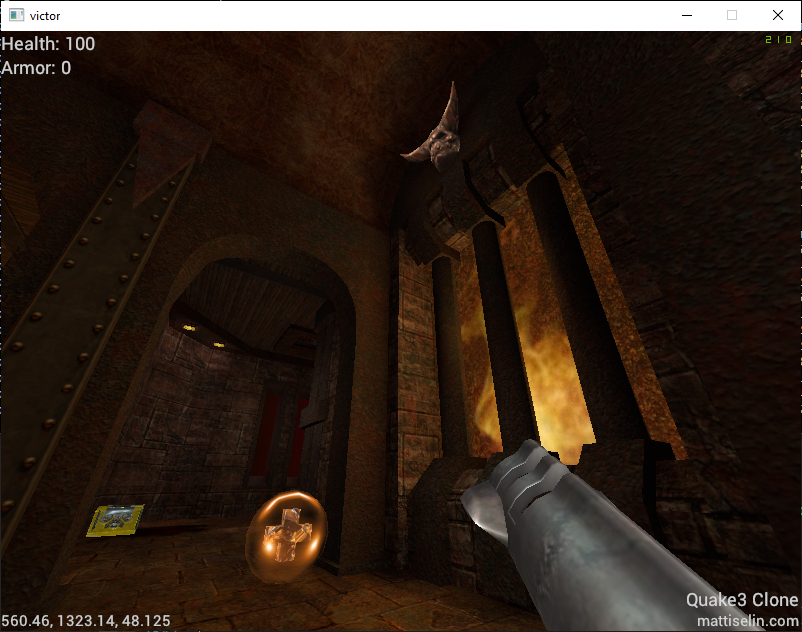

Well, I managed to get a little further than I did in 2007 to start with – with working lightmaps for example:

OK, but rendering a Quake 3 map is just scratching the surface of making a game. What’s more, Quake 3 BSP maps have more than just blocky rectangular geometry – they have Bezier surfaces to create curved geometry at runtime. Back then, this let id offer a geometry detail slider to let weaker computers reduce tessellation and get less-round round surfaces. Nowadays, that’s less of a big deal, so I thought I’d take it a little further.

Tessellation

Rather than write code to grab these Bezier surfaces (defined by 9 control points), and tessellate them at load time, I thought this would be a great opportunity to learn how to write OpenGL tessellation shaders.

It took a little while to wrap my head around the surfaces, which did involve drawing several example surfaces in a notebook (always keep a notebook near your keyboard!) and recognizing the characteristics of the control points and how they resulted in curved geometry.

Once I managed to figure that out, and load the control points in the correct order from the BSP file (again… pen & paper), I was able to put together the shader:

```glsl
// Tessellation evaluation shader.
layout(quads, equal_spacing, ccw) in;

vec3 bezier2(vec3 a, vec3 b, float t) {
    return mix(a, b, t);
}

vec4 bezier2(vec4 a, vec4 b, float t) {
    return mix(a, b, t);
}

vec3 bezier3(vec3 a, vec3 b, vec3 c, float t) {
    return mix(bezier2(a, b, t), bezier2(b, c, t), t);
}

vec4 bezier3(vec4 a, vec4 b, vec4 c, float t) {
    return mix(bezier2(a, b, t), bezier2(b, c, t), t);
}

void main()
{
    float u = gl_TessCoord.x;
    float v = gl_TessCoord.y;

    // interpolate position
    vec4 a = bezier3(tcs_out[0].UntransformedPosition, tcs_out[3].UntransformedPosition, tcs_out[6].UntransformedPosition, u);
    vec4 b = bezier3(tcs_out[1].UntransformedPosition, tcs_out[4].UntransformedPosition, tcs_out[7].UntransformedPosition, u);
    vec4 c = bezier3(tcs_out[2].UntransformedPosition, tcs_out[5].UntransformedPosition, tcs_out[8].UntransformedPosition, u);

    vec4 pos = bezier3(a, b, c, v);
    gl_Position = mvp * pos;
}
```

The results worked out great, and it was very satisfying to use this as an opportunity to build out support in my 3D engine for tessellation shaders. The only shader type not yet supported is a Compute shader, and I’m hoping to dig into those soon too.
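For anyone who wants to poke at the maths without a GPU, here is a rough CPU-side sketch of the same biquadratic evaluation, written in Python purely for illustration. It mirrors the control point indexing of the shader; the function names (`lerp`, `eval_patch`, `tessellate`) are made up for this example and are not part of the engine.

```python
def lerp(a, b, t):
    """Linear interpolation between two points given as tuples (like GLSL mix)."""
    return tuple(x + (y - x) * t for x, y in zip(a, b))


def bezier3(a, b, c, t):
    """Quadratic Bezier via de Casteljau: two lerps, then a lerp of the results."""
    return lerp(lerp(a, b, t), lerp(b, c, t), t)


def eval_patch(cp, u, v):
    """Evaluate a 3x3 patch. cp holds 9 points, indexed like tcs_out[] in the shader."""
    a = bezier3(cp[0], cp[3], cp[6], u)
    b = bezier3(cp[1], cp[4], cp[7], u)
    c = bezier3(cp[2], cp[5], cp[8], u)
    return bezier3(a, b, c, v)


def tessellate(cp, level):
    """Sample the patch on a (level + 1) x (level + 1) grid, as a load-time
    tessellator might. `level` plays the role of the geometry detail slider."""
    return [[eval_patch(cp, i / level, j / level) for i in range(level + 1)]
            for j in range(level + 1)]
```

One thing the notebook drawings made obvious: the surface passes through the four corner control points but is only pulled towards the others. Lifting the centre control point of a flat patch to height 4 raises the middle of the surface to height 1, not 4.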

Quake 3 Shaders

Quake 3 (well, idTech 3) allows the use of its own scripted shaders to shade geometry. While many surfaces are textured with just an image (plus a lightmap), shaders allow for significantly more flexibility when rendering. For example, the flames on torches in maps are shaders that set up an animation across up to 8 image files – so with just two quads, a flickering flame can be created.
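To make the flame example concrete, picking the image for the current moment can be as simple as the sketch below. This is Python for illustration only, and it assumes the animation rate is expressed in frame changes per second; the function and parameter names are hypothetical.

```python
import math


def anim_frame(time_s, frames_per_sec, num_frames):
    """Index of the image to show at time_s, cycling through num_frames images.

    Assumption: frames_per_sec is the number of frame changes per second.
    """
    if not 1 <= num_frames <= 8:
        raise ValueError("expected between 1 and 8 animation frames")
    return math.floor(time_s * frames_per_sec) % num_frames
```

With 8 frames at 8 frames per second, the flame loops exactly once per second.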

The shaders also support texture coordinate modification – based on constants or trigonometric functions – used to great effect to create moving skies, lava, or plasma electric effects by just rotating texture coordinates around.
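As a rough illustration of what these modifiers do to a texture coordinate, here is a small Python sketch of a scroll and a rotate. It assumes rotation happens around the centre of the texture at (0.5, 0.5) and that positive speeds rotate counter-clockwise; the sign convention and the function names are assumptions for this example, not the engine's code.

```python
import math


def tcmod_scroll(st, s_speed, t_speed, time_s):
    """Shift a texture coordinate at a constant speed (texture units per second)."""
    s, t = st
    return (s + s_speed * time_s, t + t_speed * time_s)


def tcmod_rotate(st, degrees_per_sec, time_s, center=(0.5, 0.5)):
    """Rotate a texture coordinate around `center` by degrees_per_sec * time_s."""
    angle = math.radians(degrees_per_sec * time_s)
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    ds, dt = st[0] - center[0], st[1] - center[1]
    return (center[0] + ds * cos_a - dt * sin_a,
            center[1] + ds * sin_a + dt * cos_a)
```

Because wrapped textures repeat, a scrolled coordinate can grow without bound and the sampler takes care of the rest, which is why a moving sky costs almost nothing.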

Vertex deformation is even an option – more on that later.

Traditionally, these shaders would require multiple draw passes to merge the layers of the shader into one final visual result. I implemented this at first, but realized my 3D engine already supports “uniform buffer objects” (i.e. buffers of data to be passed to shaders), so I rebuilt my rendering path for Quake 3 models and geometry. Now, the Quake 3 shader is converted into a structure that contains all the information, texture stages, and other flags related to the rendering. That’s passed to the graphics card, where the GLSL shader iterates through the list of stages and renders them, performing blending between stages within the fragment shader itself.

The end result is a single draw call for geometry but with all of the same visual results! Combined with bindless textures, the result was a dramatic reduction in draw and bind calls, and no need to sequence multiple draw calls and OpenGL blending stages.
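The idea of blending stages inside the fragment shader can be sketched on the CPU like this. It is a simplified Python model rather than my actual GLSL: only a handful of blend factors are covered, the enum values are invented for the example, and each stage is reduced to a single already-sampled RGBA colour.

```python
# Hypothetical blend factor codes; the real shader uses its own numbering.
ZERO, ONE, SRC_ALPHA, ONE_MINUS_SRC_ALPHA, DST_COLOR = range(5)


def blend_factor(mode, src, dst):
    """Per-channel factor for one blend mode; src and dst are RGBA tuples in [0, 1]."""
    if mode == ZERO:
        return (0.0,) * 4
    if mode == ONE:
        return (1.0,) * 4
    if mode == SRC_ALPHA:
        return (src[3],) * 4
    if mode == ONE_MINUS_SRC_ALPHA:
        return (1.0 - src[3],) * 4
    if mode == DST_COLOR:
        return dst
    raise ValueError(f"unsupported blend mode {mode}")


def blend(src, dst, src_mode, dst_mode):
    """result = src * src_factor + dst * dst_factor, clamped to 1.0 per channel."""
    sf = blend_factor(src_mode, src, dst)
    df = blend_factor(dst_mode, src, dst)
    return tuple(min(1.0, s * a + d * b) for s, a, d, b in zip(src, sf, dst, df))


def shade(stages):
    """Fold a list of (color, src_mode, dst_mode) stages into one colour,
    the way a single fragment shader invocation would."""
    result = (0.0, 0.0, 0.0, 0.0)
    for color, src_mode, dst_mode in stages:
        result = blend(color, result, src_mode, dst_mode)
    return result
```

A texture stage followed by a lightmap stage using `(DST_COLOR, ZERO)` multiplies the two together, which is the classic lightmapped-wall look, without the framebuffer ever seeing the intermediate result.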

```glsl
struct TCMod
{
    int mode;
    float p0;
    float p1;
    float p2;
    float p3;
    float p4;
    float p5;
};

struct Stage
{
    /*
     * stage_pack0: packed bitfield with stage shader parameters. 4 bytes.
     *   alt_texture : 2 - alternative texture flag
     *   blend_src   : 3 - source blend mode in this stage
     *   blend_dst   : 3 - destination blend mode in this stage
     *   rgbgen_func : 3 - rgbgen function selector
     *   rgbgen_wave : 3 - rgbgen wave to use (if function is wave)
     *   tcgen_mode  : 2 - tcgen mode selector
     *   num_tcmods  : 3 - number of tcmods in use
     */
    int stage_pack0;

    // rgbgen wave params (if function is wave) - 16 bytes.
    float rgbgen_base;
    float rgbgen_amp;
    float rgbgen_phase;
    float rgbgen_freq;

    // all our texture coord modification parameters
    TCMod tcmods[4];
};

layout (std140) uniform Game
{
    /*
     * game_pack0: packed bitfield with common shader parameters. 4 bytes.
     *   num_stages : 3 - number of stages in this shader
     *   direct     : 1 - avoid caring about blends and whatnot
     *   sky        : 1 - rendering sky
     *   model      : 1 - rendering a model (ignores lightmaps)
     */
    int game_pack0;

    layout(bindless_sampler) uniform sampler2D lightmap;
    layout(bindless_sampler) uniform sampler2D textures[8];

    Stage stages[8];

    // additional transformations to apply beyond the model/vp matrices
    // used for models attached to attachment points on other models
    mat4 local_transform;
};
```

I packed a number of the parameters into bitfields to reduce the size of the uniform buffer. I had visions of sending the GPU the entire selection of shaders in use and passing only a shader index in the draw call (or perhaps storing it as a vertex attribute), but I ultimately ran into the uniform buffer size limit on larger maps and decided to leave this idea alone.
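The packing itself is nothing fancy. Here is a minimal Python sketch of how a bitfield like `stage_pack0` could be built on the CPU and picked apart again. The field names and widths come from the comment in the struct; the bit order (first field in the lowest bits) is an assumption made for this example, as are the helper names.

```python
# (name, width in bits), lowest bits first. The ordering is an assumption.
STAGE_FIELDS = [
    ("alt_texture", 2),
    ("blend_src", 3),
    ("blend_dst", 3),
    ("rgbgen_func", 3),
    ("rgbgen_wave", 3),
    ("tcgen_mode", 2),
    ("num_tcmods", 3),
]


def pack_fields(layout, values):
    """Pack named values into one integer; missing fields default to 0."""
    packed, shift = 0, 0
    for name, width in layout:
        value = values.get(name, 0)
        if not 0 <= value < (1 << width):
            raise ValueError(f"{name}={value} does not fit in {width} bits")
        packed |= value << shift
        shift += width
    return packed


def unpack_fields(layout, packed):
    """Inverse of pack_fields: shift and mask each field back out."""
    values, shift = {}, 0
    for name, width in layout:
        values[name] = (packed >> shift) & ((1 << width) - 1)
        shift += width
    return values
```

The seven stage fields add up to 19 bits, so they fit comfortably in a single 32-bit `int`; on the GLSL side the same shift-and-mask is all that is needed to read them back.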

Vertex Deformation

I mentioned vertex deformation earlier in this post.

Quake 3 shaders allow for vertex deformation, utilizing trigonometric functions to manipulate the vertices of the geometry in real time. This is used for things like flags. This seemed like an even better fit for tessellation shaders than the Bezier surfaces I mentioned above.

This was just a matter of implementing an alternative tessellation shader that would perform the deformations. In the code example below, “q3sin” and such are functions that just call the relevant trigonometric function, utilizing the parameters provided to correctly manipulate the result. In the original Quake 3 source, these were lookups in precomputed tables. Now, I’m just running the functions on the GPU.

```glsl
if (wave == RGBGEN_WAVE_SIN) d_val = q3sin(base, amp, phase, freq);
if (wave == RGBGEN_WAVE_TRI) d_val = q3tri(base, amp, phase, freq);
if (wave == RGBGEN_WAVE_SQUARE) d_val = q3square(base, amp, phase, freq);
if (wave == RGBGEN_WAVE_SAW) d_val = q3saw(base, amp, phase, freq);
if (wave == RGBGEN_WAVE_INVSAW) d_val = q3invsaw(base, amp, phase, freq);

// deform along the normal
vertex_out.Position.xyz += vertex_out.Normal * d_val;
```

This works pretty well.
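For reference, the wave helpers boil down to something like the following Python sketch. It assumes each wave has a period of 1 in `phase + time * freq` and returns `base + amp * wave`. The explicit `time_s` parameter is an addition for this example (in the shader the time would come from elsewhere), and the exact shapes are my reading of the original lookup tables rather than a copy of them.

```python
import math


def _cycle(phase, freq, time_s):
    """Position within the current period, in [0, 1)."""
    x = phase + time_s * freq
    return x - math.floor(x)


def q3sin(base, amp, phase, freq, time_s):
    return base + amp * math.sin(2.0 * math.pi * (phase + time_s * freq))


def q3tri(base, amp, phase, freq, time_s):
    x = _cycle(phase, freq, time_s)
    # Triangle: 0 -> 1 -> 0 -> -1 -> 0 over one period.
    if x < 0.25:
        wave = 4.0 * x
    elif x < 0.75:
        wave = 2.0 - 4.0 * x
    else:
        wave = 4.0 * x - 4.0
    return base + amp * wave


def q3square(base, amp, phase, freq, time_s):
    return base + amp * (1.0 if _cycle(phase, freq, time_s) < 0.5 else -1.0)


def q3saw(base, amp, phase, freq, time_s):
    return base + amp * _cycle(phase, freq, time_s)


def q3invsaw(base, amp, phase, freq, time_s):
    return base + amp * (1.0 - _cycle(phase, freq, time_s))


def deform_along_normal(position, normal, d_val):
    """Push a vertex along its normal by d_val, as in the shader snippet."""
    return tuple(p + n * d_val for p, n in zip(position, normal))
```

Giving neighbouring vertices slightly different phases is what turns a uniform pulse into a ripple travelling across a flag.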

Summary

There’s much more work to be done – I’ve been working on getting 3D models loaded and animated, building out the projectile logic so the game’s weapons work, and all that gameplay stuff that turns this from a cool handful of technical demo elements into a playable game.

The main reason I wanted to set this as a challenge for myself is that I can use the assets from the base game to be able to build the clone – I’m not a particularly great artist – and also have a point of reference along the way. At first I thought it was a little strange to build something that already exists, but the extent to which this project has already pushed the edge of my 3D engine and my own thinking about how to build 3D applications has been invaluable.

Here’s to being able to actually play the game soon, though 🙂